Grain - The devil is in the detail

Technorati Tags: BI, Business Intelligence, OLAP, SQL server 2008

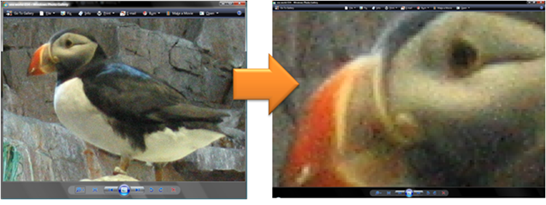

Business Intelligence is just like digital photography, the more detail you want the more space you are going to use and in both cases we talk about grain and granularity. If you have ever worked with RAW files on a 'Prosumer' (an IT Professional with as back ground in Japanese wrestling?) camera you will know that they are 3-4 times the size of the equivalent JPEG file and so most us (except James O'Neill on our team) just use JPEG to save some of our hard disks for work.

That's great for all the paparazzi, but what about business intelligence professionals? We are required to capture every transaction in the business in great detail and hold all of the historical information back to the dawn of computing. In the data warehouse this data explosion can test the database server in two key ways, it takes ever longer to add more data in and critically, query performance gets worse and worse. So what's the answer?

Reduce the amount of data held

Don't be tempted to aggregate the data as it is loaded into the data warehouse i.e. keep the grain of the information as detailed as possible from the source system. This is especially true if the data warehouse is going to hold history that isn't being held in the source system .

This is stating the obvious, but push back on some of the requirements e.g. is all that history really necessary to the success of the project? In retail, a rolling 106 weeks of information will give this week this year, this week last year and this week 2 years ago, as well as this year to date and last year to date which is usually enough. If not, think about reducing the grain of information for historical data that is available on line. For example instead of holding the exact date of a sale, aggregate sales to the week level and think about whether it is necessary to hold historical data at the product level where products come and go and the key trend is how a group of products performs. Also in this category is carefully typing the data in the data warehouse - fact tables should have bigint, int , smallint or even tinyint foreign surrogate keys , plus measures/ facts only.

Large dimensions such as customer need careful planning and data typing as well. All columns should be 'not null' for query performance and char and nchar should only be used where the length of the data never varies.

A good tip for the time dimension is to use a primary key in the form YYYYMMDD, but stored as an integer. you can apply logic to this without going back to the data dimension all the time.

OLAP

As I mentioned yesterday, OLAP is designed to be fast, but you would think that with all of the pointers, data and calculations that it would be orders of magnitude larger than the warehouse it came from and further aggravate the storage issue. However the space overhead issue is not as bad as it appears and there are two reasons for this:

- The data warehouse will need to be extensively indexed to return reasonable response times to users' many and diverse queries.

- A good olap provider will have sophisticated compression algorithms and in my experience the olap database is usually less than half of the size of the warehouse it came from, and yes I am referring to SQL Server Analysis Services.

Compression

Of course if you can compress the data in an OLAP database surely the same data could be compressed in the source database.This useful feature will only be available in SQL server 2008 onwards and it's designed very much with large fact tables in mind. The keen eyed among you will have noticed it's not in the the latest CTP4 release of the beta and I will be posting more on compression when it's available for download.

Partitions

Enterprise edition SQL Server support partitions for both relational and OLAP data. The idea is to create a new partition from the latest load of the data and then add this partition to the rest of the partitions that comprise a table or cube data to speed up loading of new information. Partitions also help improve query performance either by limiting a query to just one partition such as the most recent month's data.

Technorati Tags: BI, Business Intelligence, Data Warehouse, OLAP, SQL Server 2008